// RU version: voice-agent.ru.md

// USER GUIDE

// TL;DR

An AI voice receptionist (Sophie) on Vapi for a home-service business: caller identification, knowledge-base lookup, appointment booking, reschedule and cancel, error escalation. The test case is GreenScape Landscaping (Saint Petersburg, FL), a fictional landscaping company.

Visitor access is read-only. The Vapi assistant has a US phone number bound but is kept unlisted -- the project ships as a portfolio artifact, not a live demo. Hear the agent on this page through the recorded-call player.

// HOW IT WORKS

An incoming call lands on the Vapi assistant. Sophie greets the caller -- the opening line lives in Vapi's firstMessage field and is proxied directly to TTS at call connect (no LLM round-trip), carrying the AI disclosure and the recording notice. While the greeting plays, an immediate phone lookup runs in parallel against the CRM so the result is ready by the time the caller finishes responding.

Sophie uses two tools. n8n_orchestrator is a single MCP tool that fans out into seven concrete operations -- client lookup, client creation, availability check, booking, appointment lookup, reschedule, cancel -- over the MCP protocol. The LLM picks the right operation by argument shape; the n8n side runs it against Supabase Postgres and Google Calendar. search_knowledge_base is a separate Vapi-native tool backed by Vapi Files (services, pricing, hours, FAQs, escalation policy).

After the call ends, Vapi posts an end-of-call report to a separate n8n webhook. That webhook persists the call (transcript, costs, structured outcome) into Postgres, writes a TCPA / wiretap audit row into consent_log, and kicks off a fire-and-forget archival of the recording into Supabase Storage.

Failure modes are covered in Errors; specific design decisions are listed in Key Patterns with cross-links to the full ADRs.

// WHAT'S IN SCOPE

Sophie's knowledge of the business is a fixed knowledge-base file (greenscape-company-info.txt) loaded into Vapi Files. Anything outside the KB is either declined or routed via callback offer.

| Capability | Coverage |
|---|---|
| KB lookups | Services, pricing ranges, business hours, service-area cities, FAQs, escalation policy. Outside the KB → Sophie offers a callback rather than guessing. |
| Booking | New appointments, reschedule, cancel. Two-hour blocks within business hours, Eastern Time. The service-area address is collected but not actively checked; the operations team confirms it post-booking (see Possible Improvements). |
| Caller identification | Auto-lookup by phone at call start (parallel with the greeting). For action requests (booking / reschedule / cancel), Sophie collects the email, looks up the CRM, and applies a secondary verification gate (last four digits of the phone on file) when phone-side matching wasn't possible. |
| Out of scope | Live transfers (callback offer instead). Tree / storm emergencies (routed to a separate phone number with explicit instructions, no CRM action). Pure information requests outside the KB → callback offer. |

Compliance. The opening line discloses the AI nature and that calls may be recorded for quality and training (FCC AI-voice ruling Feb 2024 + CIPA / two-party-consent state coverage). Each completed call writes a consent_log row capturing the disclosure text actually spoken, fetched live from Vapi assistant.firstMessage at end-of-call so the audit row reflects what the caller heard, independent of any later prompt edits.

// TECHNICAL REFERENCE

// STACK

| Layer | Technology | Role |
|---|---|---|
| Voice platform | Vapi | Hosts the assistant, system prompt, tool routing, end-of-call analysis pipeline, knowledge-base files |
| LLM | anthropic/claude-haiku-4-5-20251001 | Reasoning, tool calling, response generation. Haiku trades reasoning headroom for sub-second TTFT (time-to-first-token); the receptionist flow doesn't need Sonnet-level reasoning, so voice latency wins |
| TTS | ElevenLabs eleven_flash_v2_5 | Speech synthesis. Direct ElevenLabs integration (not via a Vapi voice provider); Flash chosen for low latency |
| STT | Deepgram flux-general-en | Speech-to-text. Flux has built-in end-of-turn detection, which supersedes Vapi's transcriptionEndpointingPlan; smartEndpointingPlan is set to Off to avoid double-handling |
| Backend | n8n (self-hosted) | 12 workflows total -- MCP orchestrator + 7 Vapi-facing tool sub-workflows + 2 internal helpers (shared_phone_normalize, archive_recording) + end-of-call webhook + 1 error handler |
| CRM | Supabase (Postgres + Storage) | Four tables (customers, calls, appointments, consent_log) + a private recordings bucket for archived audio |
| Schedule | Google Calendar | Slot availability checks, event create / update / delete |
| Alerts | Discord | Tool failures and external trigger failures funnel into a single error_handler workflow that posts to a Discord webhook |

// ARCHITECTURE

Twelve n8n workflows cooperate. orchestrator is the single Vapi-side entry point -- an MCP server that advertises seven concrete tool operations. end_of_call is a separate webhook that fires when Vapi finishes a call; it persists the call record, audits consent, and kicks off recording archival. archive_recording runs fire-and-forget from end_of_call to upload the .mp3 into Supabase Storage. error_handler catches unhandled failures across all of them and posts a Discord alert. The two internal helpers (shared_phone_normalize, archive_recording) are not exposed to Vapi; peers invoke them via executeWorkflow. The five most representative workflows are shown below. The other tool sub-workflows (create_client, check_availability, event_lookup, update_event, delete_event) follow the same patterns as client_lookup and book_event.

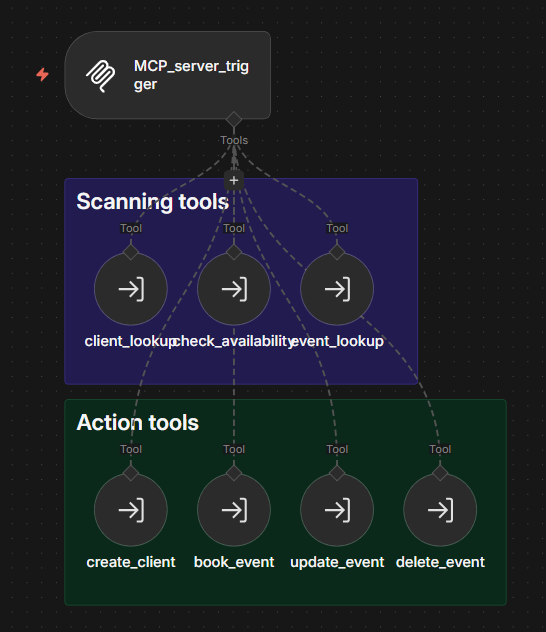

// ORCHESTRATOR -- the single Vapi-side tool. MCP server fans out to seven concrete operations.

MCP_server_trigger -- entry point for Vapi's n8n_orchestrator tool. Advertises the seven sub-workflows over the MCP protocol; Sophie's LLM picks one by argument shape.

- Scanning tools (read-only): client_lookup, check_availability, event_lookup
- Action tools (mutating): create_client, book_event, update_event, delete_event

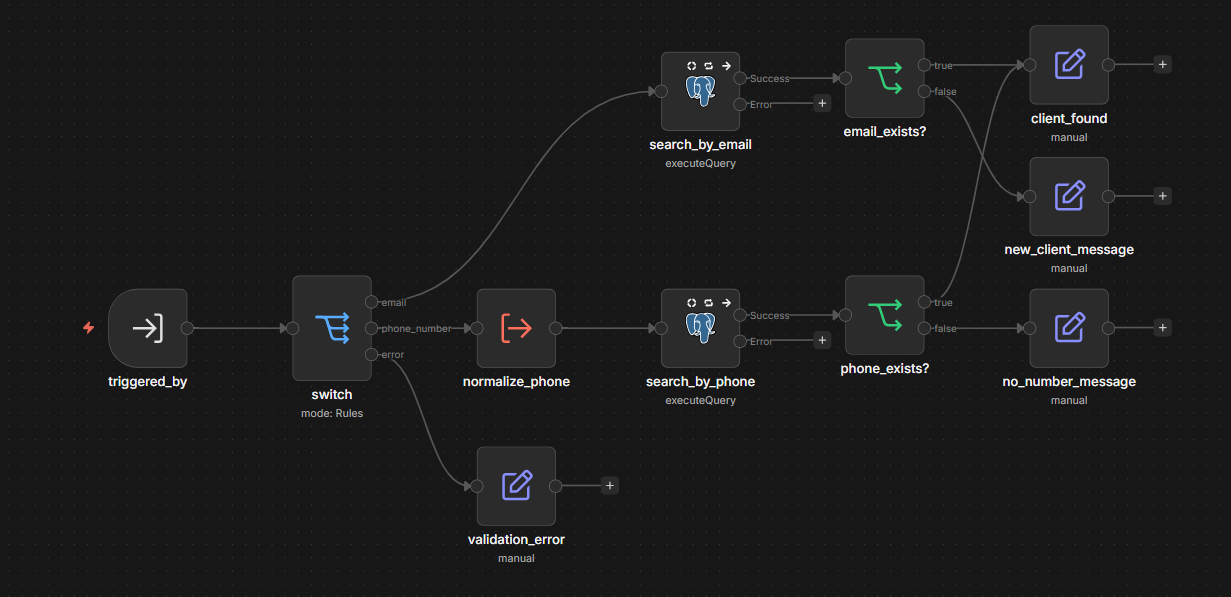

// CLIENT_LOOKUP -- a representative scanning tool. Look up a customer by email or phone, return CRM record + customer_id (UUID).

- triggered_by -- invoked by orchestrator via the MCP toolWorkflow node
- switch -- Rules mode; routes by which input is non-empty: email, phone_number, or fallthrough error
- Email branch: search_by_email (Postgres executeQuery) → email_exists? (IF) → client_found or new_client_message
- Phone branch: normalize_phone (executeWorkflow → shared_phone_normalize for E.164) → search_by_phone → phone_exists? → client_found or no_number_message
- validation_error -- fallback when neither input is provided; instructs Sophie to ask for either email or phone
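The two routing decisions in client_lookup are easier to reason about as plain functions. Below is a minimal Python sketch that assumes the switch checks email first, and that shared_phone_normalize follows exactly the rules listed under Key Patterns: strip non-digit / non-plus characters, add +1 when it is missing, and return an empty string below ten digits. The names route_lookup, normalize_phone and COUNTRY_CODES are hypothetical. The real logic lives in an n8n Switch node and a Code node, and only the US default is modelled here.

```python
import re
from typing import Optional

# Hypothetical country table; the described helper only needs the US default.
COUNTRY_CODES = {"US": "1"}


def route_lookup(email: Optional[str], phone_number: Optional[str]) -> str:
    """Mirror the switch node: the first non-empty input wins, else the error branch."""
    if email and email.strip():
        return "email"
    if phone_number and phone_number.strip():
        return "phone_number"
    return "validation_error"


def normalize_phone(phone_number: Optional[str], default_country: str = "US") -> str:
    """Sketch of shared_phone_normalize: return an E.164 string or ''."""
    if not phone_number:
        return ""
    # Keep digits and '+' only. Spaces, dashes, dots, parentheses and template
    # text such as "{{customer.number}}" are removed, leaving no digits there.
    cleaned = re.sub(r"[^0-9+]", "", phone_number)
    digits = cleaned.replace("+", "")
    if len(digits) < 10:
        return ""  # too short to be a full number
    if cleaned.startswith("+"):
        return "+" + digits  # caller already supplied a country code
    code = COUNTRY_CODES[default_country]
    if len(digits) == 10 + len(code) and digits.startswith(code):
        return "+" + digits  # e.g. 1-727-555-0173 already carries the US prefix
    return "+" + code + digits
```

Returning an empty string for short input means a seven-digit local number is never guessed into a wrong E.164 value.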

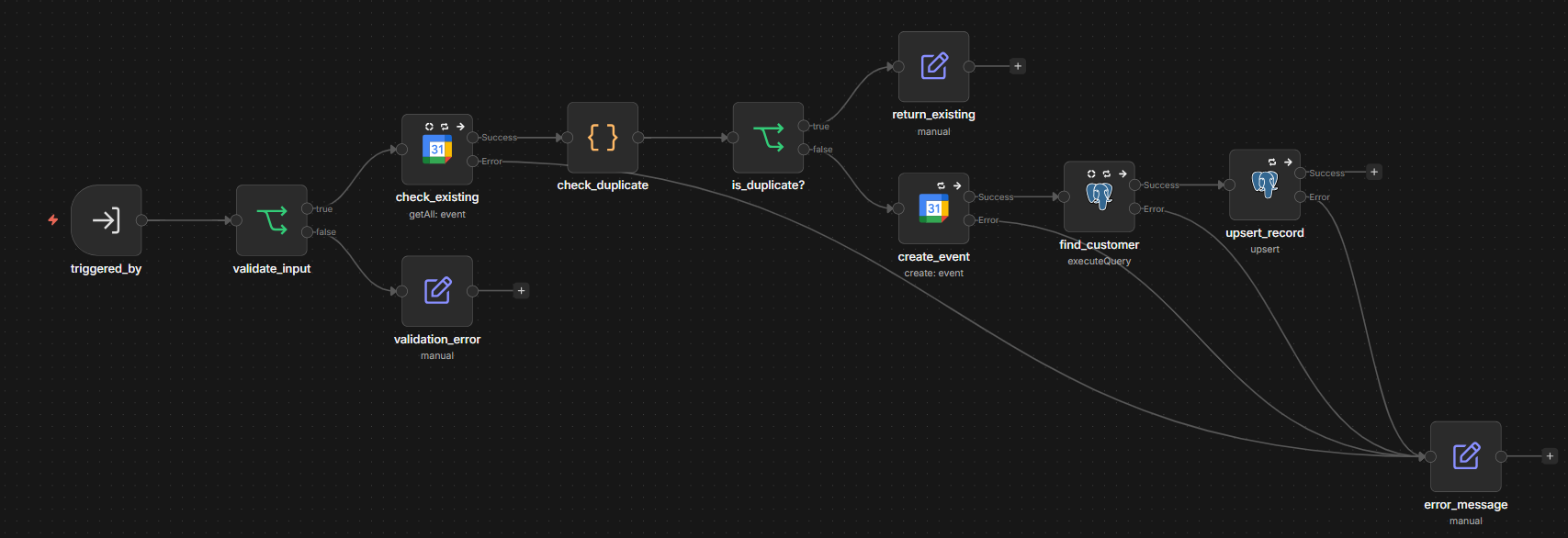

// BOOK_EVENT -- a representative action tool. Idempotent: a duplicate booking request returns the existing appointment_id and creates no new event.

- validate_input -- IF gate on required fields (start_time, end_time, email, client_name, service_type, address)
- check_existing -- GCal getAll from start_time to start_time + 5 min, with the caller's email in attendees
- check_duplicate + is_duplicate? -- match analysis + IF branching
- Duplicate match: return_existing returns the existing appointment_id; no new event is created (idempotency)
- No match: create_event (GCal create) → find_customer (Postgres lookup of customer_id by email) → upsert_record (Postgres upsert into appointments, ON CONFLICT gcal_event_id, status scheduled)
- error_message / validation_error -- common error sinks; return an error instruction for Sophie
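The duplicate check can be modelled in a few lines. This is a minimal sketch under the following assumptions: an in-memory list stands in for Google Calendar, an event counts as a duplicate when it starts within five minutes of start_time and lists the caller's email (case-insensitive) as an attendee, and the evt-N id scheme is hypothetical. The real check_existing node queries GCal getAll over that window instead.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Optional

# check_existing looks from start_time to start_time + 5 minutes.
DUPLICATE_WINDOW = timedelta(minutes=5)


def find_duplicate(events: List[Dict], start_time: datetime, email: str) -> Optional[str]:
    """Return the id of an event that starts in the window and lists the caller."""
    wanted = email.strip().lower()
    window_end = start_time + DUPLICATE_WINDOW
    for event in events:
        attendees = {a.strip().lower() for a in event.get("attendees", [])}
        if start_time <= event["start"] <= window_end and wanted in attendees:
            return event["id"]
    return None


def book_event(calendar: List[Dict], start_time: datetime, end_time: datetime,
               email: str) -> Dict:
    """Create an event unless an equivalent one already exists (idempotent)."""
    existing = find_duplicate(calendar, start_time, email)
    if existing is not None:
        return {"appointment_id": existing, "created": False}  # return_existing path
    new_id = f"evt-{len(calendar) + 1}"  # hypothetical id scheme
    calendar.append({"id": new_id, "start": start_time, "end": end_time,
                     "attendees": [email.strip().lower()]})
    return {"appointment_id": new_id, "created": True}
```

A check-then-create sequence like this still leaves a race between the lookup and the insert. That gap is why the design also relies on the partial UNIQUE index on (customer_id, start_time) described under Key Patterns.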

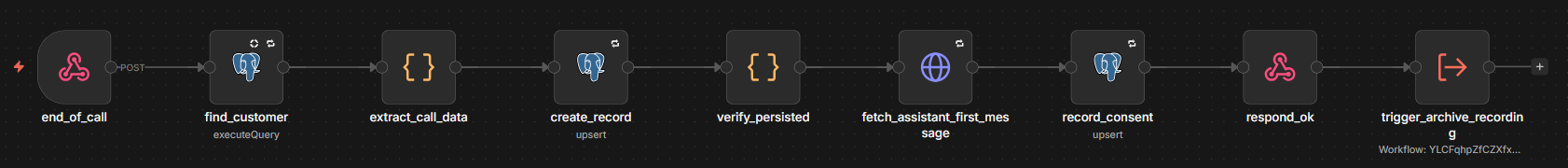

// END_OF_CALL -- runs after each Vapi call ends. Persists the call, audits consent, kicks off recording archival.

- end_of_call -- Vapi webhook (POST) authenticated via Header Auth Bearer; rejected at the HTTP layer if the header is missing or wrong
- find_customer -- Postgres executeQuery on customers by customer.number (E.164)
- extract_call_data -- Code node that parses the transcript, tool-call aggregates, costs, and structured analysisPlan outputs (matched by configured UUIDs)
- create_record -- Postgres upsert on calls, ON CONFLICT (vapi_call_id) -- idempotent on retries
- verify_persisted -- Code node asserting the upserted row has both id and vapi_call_id; throws otherwise so Vapi retries
- fetch_assistant_first_message -- HTTP GET /assistant/{id} on the Vapi API (Bearer auth) so consent_log.disclosure_text reflects exactly what was spoken
- record_consent -- Postgres upsert on consent_log, ON CONFLICT (vapi_call_id, consent_type) -- TCPA / wiretap audit row
- respond_ok -- 200 to Vapi
- trigger_archive_recording -- fire-and-forget executeWorkflow into archive_recording
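The drift rules in extract_call_data and the assertion in verify_persisted can be sketched as two small functions. This is a minimal Python sketch that assumes the Structured Output names equal the target column names (outcome, appointment_booked, call_category, customer_sentiment). The real node matches configured IDs. StructuredOutputDrift, map_structured_outputs and verify_persisted as written here are hypothetical.

```python
import logging
from typing import Any, Dict

log = logging.getLogger("extract_call_data")

# Assumption: Structured Output names match the target column names.
EXPECTED_OUTPUTS = ("outcome", "appointment_booked", "call_category", "customer_sentiment")


class StructuredOutputDrift(RuntimeError):
    """Raised when none of the expected analysisPlan outputs arrived."""


def map_structured_outputs(received: Dict[str, Any]) -> Dict[str, Any]:
    present = [name for name in EXPECTED_OUTPUTS if name in received]
    if not present:
        # Full mismatch: fail loudly so error_handler posts it to Discord.
        raise StructuredOutputDrift(
            f"expected {list(EXPECTED_OUTPUTS)}, received {sorted(received)}"
        )
    missing = [name for name in EXPECTED_OUTPUTS if name not in received]
    if missing:
        # Partial drift: keep what mapped; missing columns stay NULL (None).
        log.warning("analysisPlan outputs missing: %s", missing)
    return {name: received.get(name) for name in EXPECTED_OUTPUTS}


def verify_persisted(row: Dict[str, Any]) -> Dict[str, Any]:
    """Throw unless the upsert returned both keys, so the webhook answers non-2xx."""
    if not row.get("id") or not row.get("vapi_call_id"):
        raise RuntimeError("calls upsert returned no id / vapi_call_id")
    return row
```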

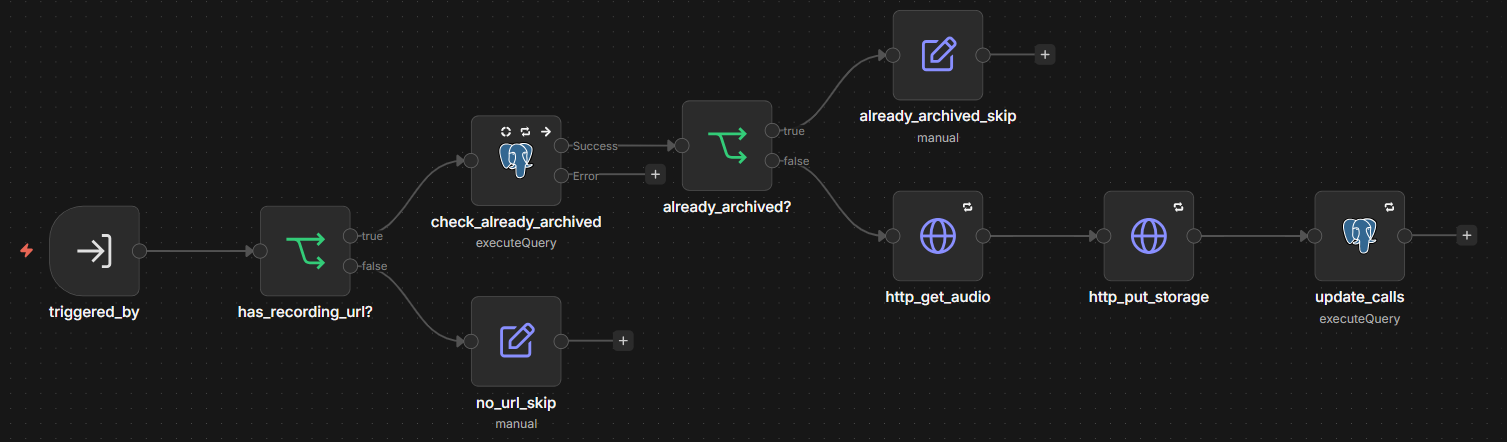

// ARCHIVE_RECORDING -- internal helper, runs fire-and-forget from end_of_call.

- has_recording_url? -- guard: skip if Vapi returned no recording_url
- check_already_archived -- Postgres executeQuery for an existing recording_archived_at
- already_archived? -- guard: skip if already done (idempotent on retries)
- http_get_audio -- HTTP GET the .mp3 from the Vapi recording_url
- http_put_storage -- HTTP PUT to the Supabase Storage bucket recordings with header x-upsert: true (idempotent)
- update_calls -- Postgres executeQuery to write back recording_storage_path / recording_size_bytes / recording_archived_at
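The two guards and the overwrite-on-upload behaviour make the helper safe to re-run. Below is a minimal sketch with assumed stand-ins: a dict plays the calls row, another dict plays the Supabase bucket (assignment models x-upsert: true), and fetch_audio stands in for the HTTP GET. The object key recordings/<vapi_call_id>.mp3 is a hypothetical naming scheme.

```python
from datetime import datetime, timezone
from typing import Any, Callable, Dict


def archive_recording(row: Dict[str, Any], storage: Dict[str, bytes],
                      fetch_audio: Callable[[str], bytes]) -> str:
    url = row.get("recording_url")
    if not url:                                      # has_recording_url?
        return "skipped: no recording_url"
    if row.get("recording_archived_at"):             # already_archived?
        return "skipped: already archived"
    audio = fetch_audio(url)                         # http_get_audio
    path = f"recordings/{row['vapi_call_id']}.mp3"   # hypothetical object key
    storage[path] = audio                            # http_put_storage; overwrite = x-upsert
    row.update(                                      # update_calls write-back
        recording_storage_path=path,
        recording_size_bytes=len(audio),
        recording_archived_at=datetime.now(timezone.utc).isoformat(),
    )
    return "archived"
```

A second run for the same call returns early at the already_archived? guard without fetching the audio again. Even without that guard, the assignment would only overwrite the same key.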

// KEY PATTERNS

The Architecture section above describes what each workflow does. This section is about why it was built this way -- design decisions that are not obvious from the node list. ADR-backed decisions link to the full record; operational and compliance patterns without a dedicated ADR are documented inline.

- Single MCP-routed orchestrator instead of N Vapi tools. Registering each backend operation as its own Vapi tool would mean seven separate tool records, each with its own credentials, server URL, headers, and JSON schema to manage. Each would add to the token-leakage surface (the Vapi management API returns server.headers verbatim) and to the LLM's context cost on every turn. Instead, one Vapi tool of type: mcp points at the n8n orchestrator workflow, and the seven sub-tool descriptions are advertised dynamically over MCP discovery on connect. Adding an eighth operation is a toolWorkflow node in n8n -- no Vapi-side change, no credential rotation, no schema duplication. ADR-002.

- Idempotency keyed by vendor IDs, with a Postgres partial UNIQUE index for defence-in-depth. Two retry paths can produce duplicates: Vapi retries the end-of-call-report on non-2xx responses, and Sophie's LLM occasionally double-fires book_event on confirmation. Using our own primary keys as conflict targets would be useless, because each retry generates a fresh UUID and no UNIQUE conflict is raised. The conflict keys are therefore values that always travel with the same physical event: vapi_call_id (Vapi assigns one per call lifecycle) for the calls upsert, and gcal_event_id (Google Calendar assigns one per created event) for appointments. A partial UNIQUE index on (customer_id, start_time) WHERE status IN ('scheduled', 'rescheduled') adds a second layer. Even if the GCal pre-check returns stale data, two concurrent bookings for the same caller at the same start_time collapse into one row via a UNIQUE violation. The partial WHERE clause frees the slot again once the row is cancelled / completed. ADR-003.

- Comma-safe Postgres writes via the upsert operation, not executeQuery. n8n's Postgres executeQuery does a literal comma-split on the resolved queryReplacement string after template interpolation. A value containing a comma (an address like "123 Main St, Apt 5", a name like "Smith, John", an entire transcript) becomes two parameters, and the rest of the value is silently dropped. All multi-column writes use the upsert operation with explicit per-column expressions, which sends each value as its own bound parameter. executeQuery is reserved for SELECTs / UPDATEs with values guaranteed comma-free (single email, UUID, timestamp). ADR-005.

- Error-instruction contract with a single centralised handler. Sub-workflows fail in two semantically different ways: validation failure (recoverable: ask again) and runtime failure (not recoverable in this call). Throwing exceptions to Vapi would mean Sophie reasons over stack traces and might leak internals. Returning raw error JSON carries the same risk, and silent retry creates long dead air in a real-time voice call. Instead, every sub-workflow returns the same shape on failure: { "error": true, "instruction": "<exact phrase Sophie should say>" }. Validation instructions are constructive ("ask the customer to provide a valid email"); runtime instructions are graceful fallbacks ("apologize and offer a callback"). The actual exception (workflow name, the node that threw, NY-time timestamp, the first 500 characters of the error message, link to the execution) is posted to Discord by a single error_handler workflow. That workflow is wired as errorWorkflow on every other workflow in the project -- one place to silence, one place to extend. ADR-004.

- Bearer Auth header on the end_of_call webhook, not URL-as-secret. The original protection was an unguessable UUID path, but the URL leaks through n8n executions, Vapi assistant config, browser DevTools, and any error alert that includes the execution link. Once leaked, rotation means retagging the path everywhere. The fix is HTTP-layer rejection: the webhook node is bound to a Header Auth credential storing Authorization: Bearer <secret>, and Vapi's assistant server.headers carries the matching value. A missing or wrong header → 403, no execution recorded, no Discord noise. Rotation is two clicks (n8n credential + Vapi header), with no path retag. ADR-007.

- customer_id chained through Sophie's context + server-side ownership check on mutations. The original event_lookup read events directly from Google Calendar and let the LLM filter them by matching the caller's email against attendees. A prompt-injection attack could ask for "all appointments today" and see other callers' bookings before the filter ran. Now client_lookup / create_client return the customer_id (UUID), the system prompt instructs Sophie to remember it, and event_lookup requires it as a parameter. Filtering happens server-side in Postgres against customer_id = $1 AND status IN ('scheduled', 'rescheduled'). The same discipline applies to update_event / delete_event: a verify_ownership Postgres SELECT confirms that the gcal_event_id belongs to the caller's customer_id before any GCal or Postgres mutation. On a miss, the workflow returns "I couldn't find that appointment under your account. Could you confirm the date and time again?" instead of executing the mutation. This closes the impersonation gap where a caller who knew another customer's gcal_event_id could otherwise ask Sophie to cancel it. ADR-006.

- Callback offer instead of live call transfer for out-of-scope requests. Vapi exposes transferCall with multiple destinations, but the default voice-receptionist instinct of "transfer to a human" has three problems for this MVP. First, there is no actual human staffing the receiving end (GreenScape is a fictional test case). Second, SIP / cold transfer adds 2-5 s of latency and can fail silently. Third, warm transfer requires dual-leg orchestration. Sophie instead offers a callback for commercial (over $25k), operations (billing / complaints / scheduling), or field (on-site) requests. The caller leaves with a definite expectation, the call ends with calls.outcome = 'callback_promised', and the agreed phone number lives in the transcript. ADR-001.

- AI / recording disclosure lives in Vapi firstMessage, and the audit row fetches it live. The opening line ("This is Sophie, your AI assistant -- calls may be recorded for quality and training") covers the FCC AI-voice ruling and CIPA / two-party-consent state laws. Putting it in the LLM-generated greeting would mean (a) one LLM round-trip of latency at call connect, (b) drift risk on every prompt edit, and (c) no single auditable source of "what the caller actually heard". Vapi's firstMessage field is proxied directly to TTS at call connect with no LLM call; the system prompt explicitly tells Sophie not to greet the caller herself. At end-of-call, the fetch_assistant_first_message HTTP node calls GET /assistant/{id} on the Vapi API and writes the returned firstMessage into consent_log.disclosure_text. The audit row therefore reflects exactly what was spoken on this call, even if the assistant's first-message field is edited later.

- Caller secondary verification gate (last four digits of the phone on file). The original identification flow trusted email alone after the initial phone lookup, so anyone who knew a customer's email could be granted that customer's identity. The fix runs only on the branch where the immediate phone lookup at call start did NOT find a match (phone missing, templated, or unknown) but the later email lookup did. On that branch, Sophie asks the caller to confirm the last four digits of the phone on file before treating them as that CRM customer. On mismatch or refusal she falls through to the new-client creation path. No backend change was needed: client_lookup already returns the phone in its response message, and the comparison happens in Sophie's own context per a dedicated rule in the system prompt's Identification for Action section.

- TTS-friendly prompt discipline, with technical formats only in tool arguments. Sophie speaks numbers as words ("nine a m to eleven a m", not "9-11 AM"), uses no markdown / lists / symbols ("everything is spoken aloud"), and stops mid-sentence on interruption. Dates require a full day-of-week + month + day + year confirmation gate before any booking / reschedule / cancel tool call ("So that is Tuesday, May fifth, two thousand twenty-six, correct?"), because the LLM cannot reliably derive the day of week from a date string under voice latency. Tool arguments, by contrast, use the strict technical formats the backend expects: ISO 8601 timestamps with the America/New_York offset, lowercased emails, E.164 phone numbers. The dual contract -- natural speech to the caller, structured data to tools -- is enforced in the system prompt's Voice & Style Rules and Data Verification Standards sections.

- Phone normalization via a shared executeWorkflow sub-workflow. Both client_lookup and create_client need E.164 normalization. Duplicating the Code-node logic across two workflows guarantees future drift: one copy will be fixed, the other won't. The two-node helper shared_phone_normalize is invoked via executeWorkflow from both peers. It consists of an executeWorkflowTrigger with inputs (phone_number, default_country) and one Code node. That node strips non-digit / non-plus characters, prefixes +1 if it is missing or detects an existing 1-prefixed 11-digit US number, and returns an empty string when the final length is below ten digits. DRY without an n8n-side library.

- CRM hygiene at the write boundary. All customer-facing data is normalized at write time, not on read:
  - Emails are lowercased before the upsert. The customers table has a UNIQUE functional index on LOWER(email) WHERE email IS NOT NULL, so John@X.com and john@x.com collapse into one record.
  - Names are Title Case, per the system prompt's Data Verification Standards, plus an inline Title-Case expression in book_event.create_event.summary as a second line of defence.
  - Phone numbers are E.164 via the shared helper above.
  - A valid_phone? IF node in create_client guards against unresolved Liquid templates. A phone value still containing {{ (when Vapi's customer.number wasn't bound to a real value) is cleared to NULL rather than written through as a malformed string.
  - Email is regex-validated (^[^\s@]+@[^\s@]+\.[^\s@]+$) before the upsert; a regex miss routes to the error-instruction path.

- Fire-and-forget recording archival, with idempotent guards. respond_ok returns 200 to Vapi as soon as the calls row is persisted and the consent_log audit row is written, so Vapi sees a successful response before any audio upload starts. trigger_archive_recording uses executeWorkflow with waitForSubWorkflow: false, so the parent workflow doesn't wait for the sub-workflow to complete. Inside archive_recording, two IF guards keep retries idempotent. has_recording_url? skips if Vapi returned no recording (the call ended before recording started, or recording is disabled), and already_archived? skips if recording_archived_at is already set in the row. The PUT to Supabase Storage uses x-upsert: true, so even without the guards a retried upload would overwrite the same object rather than fail.

- analysisPlan structured-output drift detection + Vapi retry contract via verify_persisted. The extract_call_data Code node maps Vapi's analysisPlan outputs into typed columns (outcome, appointment_booked, call_category, customer_sentiment) by enumerating the expected output names against the names Vapi actually sent. A full mismatch throws an explicit error naming the expected vs received names, so a renamed Structured Output in the Vapi dashboard surfaces as a loud failure in Discord rather than as silently NULL columns. Partial drift (some names present, some missing) logs a warning and continues with what mapped. A try / catch falls back to a minimal record so a partial Vapi payload still creates a row. After the create_record upsert, verify_persisted asserts that the returned row carries both id and vapi_call_id. On a miss it throws: the n8n webhook returns non-2xx, Vapi retries, and the vapi_call_id UNIQUE constraint keeps the retry idempotent.

- Layered RLS policies: demo service_role + production owner_authenticated_read. Two layers cooperate on the same tables. The demo layer (migrations 00003 / 00008) grants service_role full access; this is what n8n uses through the Supabase service-role JWT. The production layer (migration 00010) adds SELECT for authenticated users carrying a custom JWT claim user_role='owner', targeted at a future read-only owner dashboard. The two layers are additive. Adding production policies doesn't constrain service_role, and anon has no policy on any operational table, so unauthenticated access is rejected by default. The claim is deliberately named user_role rather than role. Supabase reserves auth.jwt()->>'role' for the Postgres role (anon / authenticated / service_role), so a custom role claim would silently no-op. The production layer is currently inert (no JWT hook, no dashboard yet); switching it on is a hook-config + frontend change, with no migration needed.

- GDPR Art.17 erasure via the anonymize_customer SQL function, with a TCPA carve-out for consent_log. A SECURITY DEFINER plpgsql function in the public schema redacts PII on customers / calls / appointments, deletes the corresponding recording .mp3 files from Supabase Storage, and sets customers.anonymized_at for audit. EXECUTE is revoked from PUBLIC / anon / authenticated and granted only to service_role, so even a misconfigured future dashboard policy cannot call it. The function is idempotent: a second invocation returns {status: "already_anonymized"} without touching the row. The consent_log table is intentionally NOT redacted. TCPA + FCC implementing rules (47 USC §227) require retaining written consent records for at least four years. GDPR Art.17(3)(b) explicitly carves out retention obligated by Union or Member State law, and TCPA is the US analogue of that carve-out. The function returns a jsonb summary (calls_redacted, recordings_deleted, appointments_redacted, consent_log_retained: true), so the caller gets an audit-shaped result without scraping diagnostics.
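The comma-split failure behind ADR-005 can be reproduced outside n8n. The functions below are a simplified model of the behaviour described in the Comma-safe Postgres writes pattern, not n8n's source code. They assume the resolved queryReplacement string is split on every comma and that only the first N fragments reach placeholders $1..$N. The names bind_via_comma_split, bind_per_column and comma_safe_for_execute_query are hypothetical.

```python
from typing import List, Sequence


def bind_via_comma_split(values: Sequence[str], placeholders: int) -> List[str]:
    """Model of the executeQuery pitfall: join, split on every comma, bind $1..$N."""
    resolved = ",".join(values)   # template interpolation yields one string
    parts = resolved.split(",")   # literal split: commas inside values count too
    return parts[:placeholders]   # extra fragments are never bound


def bind_per_column(values: Sequence[str]) -> List[str]:
    """Model of the upsert operation: one bound parameter per column value."""
    return list(values)


def comma_safe_for_execute_query(values: Sequence[str]) -> bool:
    """Guard for the remaining executeQuery uses (emails, UUIDs, timestamps)."""
    return all("," not in v for v in values)
```

With the name "Smith, John" and the address "123 Main St, Apt 5", the comma-split model binds $1 = "Smith" and $2 = " John", and the address never reaches the database. Per-column binding passes both values through intact.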

Numeric parameters (model versions, retry counts, recording cap, retention windows) -- see Limits & Timeouts.
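The write-boundary hygiene from the CRM hygiene pattern can be sketched as one preparation step before an upsert. This is a minimal Python sketch that assumes the email regex quoted in that pattern and the create_client validation instruction from the error table in Errors. prepare_customer_row and its helpers are hypothetical names. The phone value is assumed to have already passed through shared_phone_normalize, so only the unresolved-template guard is modelled here.

```python
import re
from typing import Any, Dict, Optional

# Regex quoted in the CRM-hygiene pattern.
EMAIL_RE = re.compile(r"^[^\s@]+@[^\s@]+\.[^\s@]+$")
# Instruction text from the create_client validation row of the error table.
CREATE_CLIENT_VALIDATION = ("Ask the customer to spell their full name "
                            "and provide a valid email address.")


def normalize_email(raw: str) -> Optional[str]:
    email = raw.strip().lower()
    return email if EMAIL_RE.match(email) else None


def title_case_name(raw: str) -> str:
    # Simple word-wise capitalisation; a name like "McDonald" becomes "Mcdonald".
    return " ".join(word.capitalize() for word in raw.split())


def clean_phone(raw: Optional[str]) -> Optional[str]:
    # Mirrors the valid_phone? guard: unresolved "{{ ... }}" templates become NULL.
    if raw is None or not raw.strip() or "{{" in raw:
        return None
    return raw.strip()


def prepare_customer_row(name: str, email: str, phone: Optional[str]) -> Dict[str, Any]:
    clean_email = normalize_email(email)
    if clean_email is None:
        # Regex miss: return the error-instruction shape instead of writing.
        return {"error": True, "instruction": CREATE_CLIENT_VALIDATION}
    return {"name": title_case_name(name), "email": clean_email, "phone": clean_phone(phone)}
```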

// LIMITS & TIMEOUTS

| Parameter | Value |
|---|---|
| LLM | Claude Haiku 4.5 (claude-haiku-4-5-20251001) -- sub-second TTFT for voice; maxTokens: 250 per response to keep voice turns short |
| TTS | ElevenLabs Flash v2.5, voice id g6xIsTj2HwM6VR4iXFCw -- chosen for low latency over richer voice models |
| STT | Deepgram Flux General English -- built-in end-of-turn detection supersedes Vapi's transcriptionEndpointingPlan (Vapi's smartEndpointingPlan is set to Off to avoid double-handling) |
| Booking blocks | Two-hour windows within business hours, Eastern Time (America/New_York); start_time must be at least one hour in the future; past times are never booked |
| Service area | 35-mile radius around Saint Petersburg, FL -- collected at booking, confirmed post-booking by the operations team (see Possible Improvements) |
| Project minimum | $500 residential; commercial (>$25k) is callback-only |
| n8n node retries | retryOnFail: true with waitBetweenTries: 3000 ms on every external-API node (Postgres, Google Calendar, Vapi API). Postgres writes use onError: continueErrorOutput to route failures into the error-instruction path; reads use alwaysOutputData: true so an empty result still passes to the next IF node rather than failing the branch |
| Discord error truncation | 500 characters max per error message; longer messages get a ... [truncated] suffix to keep Discord webhook payloads compact |
| Recording cap | 50 MB per file in the Supabase Storage recordings bucket; ~3-6 MB per typical call → about 200 calls before the free-tier 1 GB total storage limit needs cleanup |
| Recording archival | Fire-and-forget from end_of_call; x-upsert: true header on the Supabase PUT so a retried upload overwrites rather than fails; idempotent guard via the recording_archived_at column |
| consent_log retention | At least four years from recorded_at per TCPA + FCC implementing rules (47 USC §227); intentionally retained through GDPR Art.17 erasure (carved out by Art.17(3)(b)) |
| Eval suite cost | ~$0.10-0.20 per full run (5 chat-mode cases × ~10-15 turns × Haiku 4.5 + Vapi tester AI + LLM judge); manual cadence (one run per major prompt edit), not per-commit CI |
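The booking-block rules in the table above can be expressed as one validation function over the ISO 8601 tool arguments. This is a minimal sketch under several assumptions. The business hours (8:00 to 17:00) are hypothetical placeholders, since the real hours live in the KB file. Timestamps must carry an Eastern offset (-04:00 or -05:00), but the sketch does not check which of the two applies on that date. Blocks are not required to align to fixed start times. validate_slot and its return strings are illustrative, not the backend's actual messages.

```python
from datetime import datetime, timedelta
from typing import Optional

BLOCK = timedelta(hours=2)       # two-hour booking windows
MIN_LEAD = timedelta(hours=1)    # start must be at least one hour in the future
ET_OFFSETS = (timedelta(hours=-4), timedelta(hours=-5))  # EDT / EST
# Hypothetical business hours; the real hours live in the knowledge-base file.
OPEN_HOUR, CLOSE_HOUR = 8, 17


def validate_slot(start_iso: str, end_iso: str, now: datetime) -> Optional[str]:
    """Return None for a bookable slot, otherwise a short reason. `now` must be aware."""
    start = datetime.fromisoformat(start_iso)
    end = datetime.fromisoformat(end_iso)
    # utcoffset() is None for naive strings, which also fails this check.
    if start.utcoffset() not in ET_OFFSETS or end.utcoffset() not in ET_OFFSETS:
        return "timestamps must carry the America/New_York offset"
    if end - start != BLOCK:
        return "slot must be a two-hour block"
    if start < now + MIN_LEAD:
        return "start_time must be at least one hour in the future"
    # With an Eastern offset, .hour is already local Eastern time.
    closing = start.replace(hour=CLOSE_HOUR, minute=0, second=0, microsecond=0)
    if start.hour < OPEN_HOUR or end > closing:
        return "slot must fall within business hours"
    return None
```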

// ERRORS

Server-side. Every workflow in the project is wired to the centralised error_handler via the errorWorkflow setting. On any unhandled error the handler fires a Discord message: workflow name, the node that threw, NY-time timestamp, the first 500 characters of the error message, and a link to the specific execution. Postgres write nodes additionally set onError: continueErrorOutput so a transient API failure routes into the error_message node and returns the standard error-instruction shape to Sophie, rather than throwing and ending the call abruptly.
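The Discord message can be sketched as a pure function that builds the webhook body. This sketch assumes the alert is sent as a standard Discord webhook JSON body with a single content field (Discord caps content at 2,000 characters, so the 500-character error cap leaves room for the other lines). It also assumes the caller passes a timestamp already converted to America/New_York. The line layout and the names truncate_error / build_discord_payload are hypothetical.

```python
from datetime import datetime
from typing import Dict

MAX_ERROR_CHARS = 500
TRUNCATION_SUFFIX = "... [truncated]"


def truncate_error(message: str) -> str:
    """Keep the first 500 characters and mark anything longer as truncated."""
    if len(message) <= MAX_ERROR_CHARS:
        return message
    return message[:MAX_ERROR_CHARS] + TRUNCATION_SUFFIX


def build_discord_payload(workflow: str, node: str, message: str,
                          execution_url: str, ny_time: datetime) -> Dict[str, str]:
    """Assemble a Discord webhook body ({"content": ...}) for one failed execution."""
    lines = [
        f"Workflow: {workflow}",
        f"Node: {node}",
        f"Time (New York): {ny_time.isoformat(timespec='seconds')}",
        f"Error: {truncate_error(message)}",
        f"Execution: {execution_url}",
    ]
    return {"content": "\n".join(lines)}
```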

Per-tool error instructions. Sophie speaks these phrases verbatim when a sub-workflow returns {error: true, instruction: "..."}.

| Failure | Instruction Sophie says |
|---|---|
| client_lookup -- neither email nor phone | "Ask the customer to provide either their email address or phone number so you can look them up." |
| create_client -- validation | "Ask the customer to spell their full name and provide a valid email address." |
| create_client -- runtime | "Apologize for the difficulty creating the account. Offer to have someone call the customer back." |
| book_event -- validation | "Ask the customer to provide their name, email address, and preferred appointment time." |
| book_event -- runtime | "Apologize for the difficulty booking the appointment. Offer to have someone call the customer back." |
| check_availability -- runtime | "Apologize for the difficulty checking the schedule. Offer to have someone call the customer back." |
| event_lookup -- runtime | "Apologize for the difficulty looking up appointments. Offer to have someone call the customer back." |
| update_event -- ownership mismatch | "I couldn't find that appointment under your account. Could you confirm the date and time again?" |
| update_event -- runtime | "Apologize for the difficulty updating the appointment. Offer to have someone call the customer back." |
| delete_event -- ownership mismatch | "I couldn't find that appointment under your account. Could you confirm the date and time again?" |
| delete_event -- runtime | "Apologize for the difficulty canceling the appointment. Offer to have someone call the customer back." |
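The split between validation and runtime failures can be modelled as a wrapper around a tool body. In n8n this behaviour comes from IF branches, onError: continueErrorOutput and the errorWorkflow setting rather than try / except, so treat the following as a Python analogy only. ValidationFailure, run_tool and the report callback are hypothetical names, and only a few rows of the table above are included.

```python
from typing import Any, Callable, Dict, Optional, Tuple


class ValidationFailure(Exception):
    """Raised by a tool body when the caller's input is incomplete or invalid."""


# Instruction strings copied from the table above (subset).
INSTRUCTIONS: Dict[Tuple[str, str], str] = {
    ("book_event", "validation"): "Ask the customer to provide their name, email address, and preferred appointment time.",
    ("book_event", "runtime"): "Apologize for the difficulty booking the appointment. Offer to have someone call the customer back.",
    ("event_lookup", "runtime"): "Apologize for the difficulty looking up appointments. Offer to have someone call the customer back.",
}


def run_tool(tool: str, body: Callable[[], Dict[str, Any]],
             report: Optional[Callable[[str, Exception], None]] = None) -> Dict[str, Any]:
    try:
        return body()
    except ValidationFailure:
        # Recoverable: Sophie asks the caller again.
        return {"error": True, "instruction": INSTRUCTIONS[(tool, "validation")]}
    except Exception as exc:
        # Not recoverable in this call: details go to the operator, never to the caller.
        if report is not None:
            report(tool, exc)
        return {"error": True, "instruction": INSTRUCTIONS[(tool, "runtime")]}
```

The instruction Sophie receives never contains the exception text. Only the report callback, which plays the role of error_handler, sees it.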

Caller-side fallbacks. Unclear input → Sophie asks for clarification up to two times, then offers a callback. Caller silent (not counting tool-execution silence) → "Are you still there?" then ends the call politely. Caller disputes system info → Sophie apologizes and offers a manager callback, never argues. Wrong-number caller → polite goodbye, end call.

// POSSIBLE IMPROVEMENTS

Capabilities within reach but not currently implemented -- documented here so the gap between "what the platform supports" and "what this MVP does today" is explicit.

- Service-area address verification via geocoding. Today Sophie collects the address as-is and operations confirms it post-booking. The earlier prompt-driven approach (Sophie reading a city list from the KB and judging distance against it) produced flaky results. It gave false negatives on in-radius addresses not literally listed (e.g. Apollo Beach FL, inside the 35-mile circle but absent from the KB enumeration). It also gave false positives on ambiguous city names (e.g. "Sun City", which could be the Tampa-area Sun City Center or Sun City, California, 2,500 miles away). Production fix: a dedicated verify_address_in_service_area n8n sub-workflow that calls the Google Maps Distance Matrix API with the property address and the business HQ and returns a boolean in_radius based on a 35-mile threshold.

- HMAC signature on the end_of_call webhook. The current Bearer Auth header is static and replayable; an intercepted request can be re-sent. The industry-standard fix is an X-Vapi-Signature header carrying an HMAC of the payload + timestamp, validated server-side. Vapi does not yet publish the exact X-Vapi-Signature format (header name, payload-to-sign, separator, hex vs base64), and community threads confirm intermittent missing-header bugs. Adoption waits on Vapi's spec or on empirical reverse-engineering.

- n8n executions data redaction. The Bearer header is captured into body.headers.authorization of every saved end_of_call execution. It is visible to anyone with n8n access (a single user, the owner, in the current setup). The n8n enterprise-tier "Redact production execution data" toggle would solve this; the community edition cannot. Revisit on production deploy via EXECUTIONS_DATA_SAVE_ON_SUCCESS=none or the per-workflow "do not save" toggle.

- Outbound callback automation. Sophie's callback offer is currently a verbal promise; nobody is actually called back automatically. That is acceptable for an MVP without a real customer base. Production needs a workflow that consumes calls.outcome = 'callback_promised' + the agreed phone from the transcript, queues a task in the appropriate team's system, and applies an SLA per category (commercial / operations / field).

- Owner dashboard + signed-URL playback for recordings. The production RLS layer (migration 00010) is in place but inert until two pieces ship: (a) a Supabase Auth Custom Access Token Hook that injects user_role='owner' into the JWT, and (b) a read-only owner-dashboard frontend that authenticates and calls PostgREST. Recording playback should go through a Supabase Edge Function that mints a short-lived signed URL using the service_role key, so owners never receive the bucket key directly.

- vapi_metadata column cleanup. The calls.vapi_metadata jsonb column stores Vapi's full raw payload as a backup during the early phase of the project, which is useful for debugging schema drift in analysisPlan outputs. Once the schema stabilises, the column should be dropped: nothing queries it, and it bloats the row.

- archive_recording.update_calls migration to upsert. The current node uses executeQuery with three comma-joined parameters (vapi_call_id, file size integer, vapi_call_id again). All values are currently comma-free, but the pattern violates ADR-005's discipline. A follow-up should migrate it to the upsert operation or to $1 / $2 / $3 parameterisation.

- Per-assistant authentication if multi-assistant. The current Bearer secret is shared across all assistants that might hit the n8n end_of_call endpoint. If a second voice assistant is bound to the same n8n instance, both will share the same Bearer. Splitting per assistant means a credential per assistant plus a small router upstream of end_of_call.

- Voice-mode tests in eval/. The current suite runs in chat mode: text-only, with no real call cost. TTS pronunciation of numbers, STT robustness under accents, and latency / interruption handling are not exercised. Voice mode costs $0.20-0.50 per case and tests platform-specific rendering rather than prompt logic. Add it when a staging assistant + isolated n8n + isolated Supabase exist.

- Mutating happy-path tests. A booking-success test would create a real Google Calendar event + Postgres rows, and automatic GCal cleanup isn't wired yet. Out of scope until a staging Vapi assistant + isolated n8n project + isolated Supabase project make mutating tests safe.

- CI gating for evals. No GitHub Actions integration -- every run hits production Vapi + n8n + Supabase, and a missing staging environment makes per-PR auto-runs costly and noisy. Manual cadence (one run per major prompt edit) is sufficient at this stage.
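As a rough stand-in for the proposed Distance Matrix check, the radius test can be prototyped with the haversine great-circle formula. This is a swapped-in technique, not the planned implementation. Haversine measures straight-line distance, so it understates road distance. It also needs already-geocoded coordinates, and geocoding is what actually resolves ambiguous names like "Sun City". All coordinates below are approximate and illustrative, and in_service_area is a hypothetical name.

```python
from math import asin, cos, radians, sin, sqrt
from typing import Tuple

EARTH_RADIUS_MILES = 3958.8
SERVICE_RADIUS_MILES = 35.0
# Approximate coordinates for Saint Petersburg, FL (illustrative only).
HQ: Tuple[float, float] = (27.7676, -82.6403)


def haversine_miles(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Great-circle distance in miles between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (a[0], a[1], b[0], b[1]))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * asin(sqrt(h))


def in_service_area(point: Tuple[float, float], hq: Tuple[float, float] = HQ,
                    radius_miles: float = SERVICE_RADIUS_MILES) -> bool:
    """Straight-line approximation of the planned in_radius flag."""
    return haversine_miles(hq, point) <= radius_miles
```

Apollo Beach (about 27.77, -82.41) comes out roughly 14 miles from the HQ point and passes. Naples (about 26.14, -81.80) comes out over 100 miles away and fails, consistent with how the eval suite treats it.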

// TEST DATA & EVALUATION

The Vapi assistant is regression-checked through a chat-mode test suite hosted on Vapi (projects/voice-agent/eval/). Five smoke scenarios target the load-bearing prompt rules; each runs a tester AI against the assistant in chat mode, and an LLM-as-judge grades the transcript against a binary PASS / FAIL rubric.

| ID | Asserts |
|---|---|
| CS-1 | Always collect email first -- Sophie does not invoke booking tools before email is provided and confirmed |
| CS-2 | ALWAYS call search_knowledge_base before answering pricing -- Sophie hits the KB before quoting figures; quoted figures match KB ranges |
| CS-3 | For ambiguous service-area queries, offer callback -- Sophie checks KB for Naples FL, answers honestly (outside service area), does not start booking |
| CS-4 | Emergency routing -- on tree / storm emergency, Sophie produces the emergency phone (727-555-0173, press 2) and ends the call without entering booking flow |
| CS-5 | Out-of-scope commercial handoff -- Sophie identifies a $50k office-park request as commercial, mentions the commercial team, offers callback with a contact-confirmation gate |

Latest run: 2026-05-07, 5 / 5 PASS after a polish-pass for three behaviours flagged in run 1 (email-dot preservation, callback-without-contact gate, emergency phone repetition). Suite source: suite-definition.json, runner: run-suite.ps1. Vapi has announced deprecation of Test Suites in favour of Simulations -- suite definitions carry over, scripts will be ported when Vapi publishes the migration guide.

Future expansion: voice-mode tests for TTS / STT robustness, mutating happy-path tests against a staging environment, and CI gating per pull request -- all blocked on a staging Vapi + isolated n8n + isolated Supabase setup. See Possible Improvements.